In September 2021, the Wall Street Journal published a series of reports based on internal Facebook documents, now known as The Facebook Files, showing that Facebook knew about its negative impact on teenagers’ mental health, societal polarization, misinformation, and more.

The revelations from whistleblower Frances Haugen, a former Facebook employee who appeared on 60 Minutes on October 3, 2021, cast even greater light on the larger, systemic harms that Facebook (and other persuasive technology giants) can inflict on society.

The Big Tech backlash has been raging worldwide for years, but less so in tranquil Canada. The US-based Center for Humane Technology, instigator of the groundbreaking film The Social Dilemma, is calling for policies that make Big Tech beneficial to society. We call for similar action in Canada to finally protect children and our human rights to privacy and constitutional freedom.

Governments Can’t Easily Regulate Tech. Try Taxing Its Divisive Impact on Mental Health & Democracy

The genie is out of the bottle and, unlike China, Western governments will not impose strict curfews or limits on the use of digital media. Legislation like the Children’s Code in the UK or revisions to the 1998 COPPA child protection law in the US are great first steps but, as with the European GDPR, implementation will be tricky.

With the Covid crisis, the Canadian federal government has an opportunity to tax first, then take the time to legislate and regulate, Facebook (and its WhatsApp and Instagram properties) and other tech giants that benefited from the crisis, such as TikTok, Google, and YouTube.

Caroline Isautier, our director, had already called for quick action, such as taxing Big Tech’s divisive impact, in May 2019, after the Christchurch mosque shootings.

At the provincial level, we’ve been advocating for schools to incorporate digital citizenship into their curriculum to protect children from the online harms of the addictive, violent, divisive, and hypersexualized content that Big Tech targets at them, much as schools already educate students about cyberbullying.

October 5th update: All Facebook properties were down on the Monday following the 60 Minutes interview with whistleblower Frances Haugen. Even employees could not access internal systems or enter company buildings. Was this a coincidence, or a convenient way to stop the spread of fury against Facebook’s actions? Others have suggested it may have been a way to destroy evidence, as Facebook faces lawsuits. Here’s our seven-part Twitter recap.

From The Wall Street Journal: the links behind each heading give access to articles that normally sit behind the Journal’s paywall, courtesy of Tech for Good Canada.

01 Facebook Says Its Rules Apply to All. Company Documents Reveal a Secret Elite That’s Exempt

Click above for full article – By Jeff Horwitz

Mark Zuckerberg has said Facebook allows its users to speak on equal footing with the elites of politics, culture and journalism, and that its standards apply to everyone. In private, the company has built a system that has exempted high-profile users from some or all of its rules. The program, known as “cross check” or “XCheck,” was intended as a quality-control measure for high-profile accounts. Today, it shields millions of VIPs from the company’s normal enforcement, the documents show. Many abuse the privilege, posting material including harassment and incitement to violence that would typically lead to sanctions. Facebook says criticism of the program is fair, that it was designed for a good purpose and that the company is working to fix it. (Listen to a related podcast.)

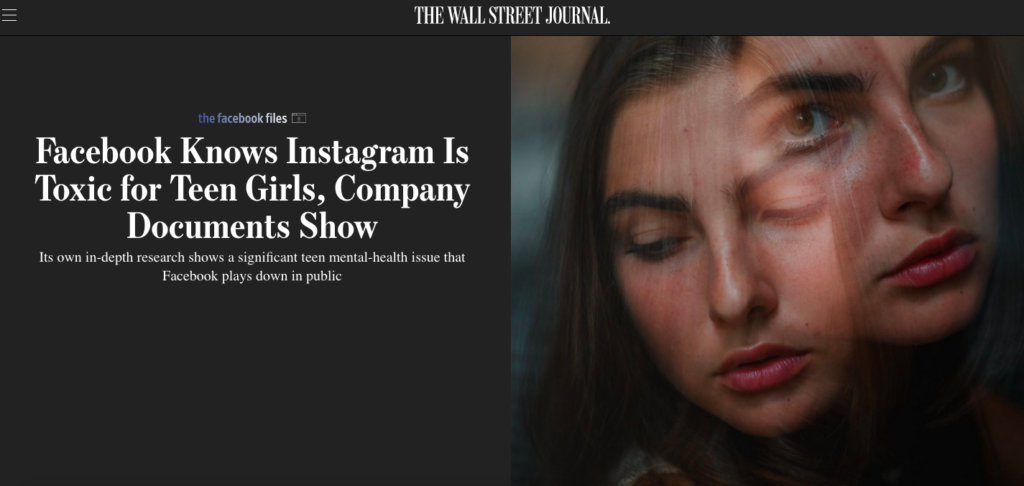

02 Facebook Knows Instagram Is Toxic for Many Teen Girls, Company Documents Show

Click above for full article – By Georgia Wells, Jeff Horwitz and Deepa Seetharaman – Our subscriber link should allow you access.

Researchers inside Instagram, which is owned by Facebook, have been studying for years how its photo-sharing app affects millions of young users. Repeatedly, the company found that Instagram is harmful for a sizable percentage of them, most notably teenage girls, more so than other social-media platforms. In public, Facebook has consistently played down the app’s negative effects, including in comments to Congress, and hasn’t made its research public or available to academics or lawmakers who have asked for it. In response, Facebook says the negative effects aren’t widespread, that the mental-health research is valuable and that some of the harmful aspects aren’t easy to address. Read the full article on Instagram’s toxic effect on teenage girls’ body image, courtesy of Tech for Good Canada, or listen to a related podcast.

03 Facebook Tried to Make Its Platform a Healthier Place. It Got Angrier Instead.

Click above for full article – By Keach Hagey and Jeff Horwitz – Our subscriber link should allow you access.

Facebook made a heralded change to its algorithm in 2018 designed to improve its platform—and arrest signs of declining user engagement. Mr. Zuckerberg declared his aim was to strengthen bonds between users and improve their well-being by fostering interactions between friends and family. Within the company, the documents show, staffers warned the change was having the opposite effect. It was making Facebook, and those who used it, angrier. Mr. Zuckerberg resisted some fixes proposed by his team, the documents show, because he worried they would lead people to interact with Facebook less. Facebook, in response, says any algorithm can promote objectionable or harmful content and that the company is doing its best to mitigate the problem. Read the full article on how divisive Facebook is designed to be, courtesy of Tech for Good Canada, or listen to a related podcast.

04 Facebook Employees Flag Drug Cartels and Human Traffickers. The Company’s Response Is Weak, Documents Show.

Click above for full article. By Justin Scheck, Newley Purnell and Jeff Horwitz

Scores of Facebook documents reviewed by The Wall Street Journal show employees raising alarms about how its platforms are used in developing countries, where its user base is huge and expanding. Employees flagged that human traffickers in the Middle East used the site to lure women into abusive employment situations. They warned that armed groups in Ethiopia used the site to incite violence against ethnic minorities. They sent alerts to their bosses about organ selling, pornography and government action against political dissent, according to the documents. They also show the company’s response, which in many instances is inadequate or nothing at all. Many of us, including the Canadian Centre for Child Protection, have heard of or witnessed this lack of response to major human rights violations. A Facebook spokesman said the company has deployed global teams, local partnerships and third-party fact checkers to keep users safe. (Listen to a related podcast.)

05 How Facebook Hobbled Mark Zuckerberg’s Bid to Get America Vaccinated

Click above for full article. By Sam Schechner, Jeff Horwitz and Emily Glazer

Facebook threw its weight behind promoting Covid-19 vaccines—“a top company priority,” one memo said—in a demonstration of Mr. Zuckerberg’s faith that his creation is a force for social good in the world. It ended up demonstrating the gulf between his aspirations and the reality of the world’s largest social platform. Activists flooded the network with what Facebook calls “barrier to vaccination” content, the internal memos show. They used Facebook’s own tools to sow doubt about the severity of the pandemic’s threat and the safety of authorities’ main weapon to combat it. The Covid-19 problems make it uncomfortably clear: Even when he set a goal, the chief executive couldn’t steer the platform as he wanted. A Facebook spokesman said in a statement that the data shows vaccine hesitancy for people in the U.S. on Facebook has declined by about 50% since January, and that the documents show the company’s “routine process for dealing with difficult challenges.”

06 Facebook’s Effort to Attract Preteens Goes Beyond Instagram Kids, Documents Show

Click above for full article. By Georgia Wells and Jeff Horwitz

Facebook has come under increasing fire in recent days for its effect on young users. Inside the company, teams of employees have for years been laying plans to attract preteens that go beyond what is publicly known, spurred by fear that it could lose a wave of users critical to its future. “Why do we care about tweens?” said one document from 2020. “They are a valuable but untapped audience.” Adam Mosseri, head of Instagram, said Facebook is not recruiting people too young to use its apps—the current age limit is 13—but is instead trying to understand how teens and preteens use technology and to appeal to the next generation. (Listen to a related podcast.)

07 Facebook’s Documents About Instagram and Teens, Published

By Wall Street Journal Staff

A Senate Commerce Committee hearing about Facebook, teens and mental health was prompted by a mid-September article in The Wall Street Journal. Based on internal company documents, it detailed Facebook’s internal research on the negative impact of its Instagram app on teen girls and others. Six of the documents that formed the basis of the Instagram article are published here.