Governments around the world are crafting, or attempting to craft, laws to protect children online. Below, we look at online child protection legislation, both enacted and proposed, in France, the UK, California, Canada, Australia and the US since 2018.

As we discuss in our remote conferences/workshops for parents on digital parenting and our student workshops on digital media and security, democracy and mental health, the video games, social networks, websites and applications that have populated the cultural universe of children and teenagers for the past 25 years were not designed to protect them. On the contrary, these digital media and communication tools were designed by tech companies, without input from child protection specialists or editors.

Here is an inventory of past or current legislation designed to protect children online around the world in 2022:

Europe has been ahead of North America on web, app and social network regulation, but California just scored big with a law protecting children online.

February 2018: France’s Digital Majority at Age 15 Law

French law on digital majority at 15 years old (French)

In France, a 15-year-old is now considered the owner of their data and image. Until the age of 15, registering on Snapchat, Instagram, or Twitter requires double consent: that of the child and that of their guardians. Information about how this data is used must be written in terms that the child can understand. This is mandatory. For children under the age of 13, on the other hand, registration is banned outright.

The initial version of the French bill set the threshold at 16, the age recommended by the European Commission. But setting the age of digital majority at 15 corresponds to “a desire to harmonize French law,” explains Paula Forteza, the En Marche deputy who is the rapporteur for the text. The age of sexual majority is set at 15, which is also the age at which a minor’s health data can be taken into account by surveys.

Is this law enforced today? That is far from certain.

September 2021: UK Online Child Protection Act

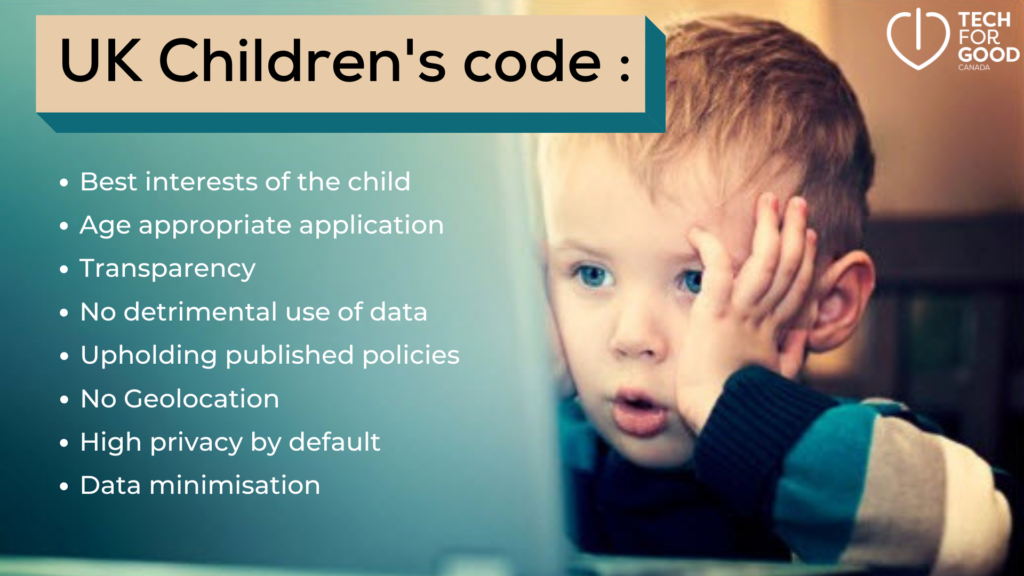

The UK Children’s Code, or Age-Appropriate Design Code, is the first law in the world promoting child-centric design of technology. It is the brainchild of Baroness Beeban Kidron, a UK filmmaker and member of the House of Lords turned advocate for children’s rights online. She founded the 5Rights Foundation in 2013 to build a safe and positive digital environment for children.

Some of the basic principles are:

- Best interests of the child: Services used by children should be designed with their best interests in mind, rather than to maximize corporate profits.

- Age appropriate application: companies should assess whether the content in the online services is appropriate for children of various ages.

- Transparency: Privacy information should be understandable by a child.

- Detrimental use of data: Data collected should not be used in ways that are detrimental to children’s well-being.

- Upholding published policies and guidelines: Companies should uphold their stated policies and guidelines.

- Geolocation: This should be turned off for children by default.

- Privacy settings: They should be on the highest level for children by default.

- Data minimisation: Apply the GDPR principle of only asking for data required to run the service offered, no more.
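As a rough illustration of what the default-settings and data-minimisation principles above could mean in practice, here is a minimal Python sketch. It is not taken from the Code or from any real platform: the names `PrivacyLevel`, `AccountDefaults`, `defaults_for` and `minimise` are hypothetical, and the adult defaults are an arbitrary placeholder.

```python
from dataclasses import dataclass
from enum import IntEnum


class PrivacyLevel(IntEnum):
    """Hypothetical privacy tiers; a real service would define its own."""
    LOW = 1
    MEDIUM = 2
    HIGHEST = 3


@dataclass(frozen=True)
class AccountDefaults:
    geolocation_enabled: bool
    privacy_level: PrivacyLevel


def defaults_for(is_child: bool) -> AccountDefaults:
    """Return the settings a new account starts with."""
    if is_child:
        # Principles from the list above: geolocation off and
        # privacy at the highest level by default for children.
        return AccountDefaults(geolocation_enabled=False,
                               privacy_level=PrivacyLevel.HIGHEST)
    # Placeholder adult defaults (an assumption, not from the Code).
    return AccountDefaults(geolocation_enabled=False,
                           privacy_level=PrivacyLevel.MEDIUM)


def minimise(requested_fields: set[str], required_fields: set[str]) -> set[str]:
    """Data minimisation: keep only the fields the service needs to run."""
    return requested_fields & required_fields
```

For example, `minimise({"email", "birthdate", "location"}, {"email"})` keeps only the email field, since that is the only one the hypothetical service declares as required.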

Breaches of children’s online rights:

In a letter to the British ICO (Information Commissioner’s Office) in November 2021, 5Rights reported as many as twelve breaches of the Age-Appropriate Design Code. These are all too familiar to me and include:

- insufficient age assurance, i.e., age is not truly verified before a user can access a service

- non-enforcement of community guidelines

- mis-advertisement of age appropriateness in app stores

- use of dark patterns and nudges

- age-inappropriate financial pressures

- low default privacy settings

- inadequate sharing of children’s data with third parties

- terms of service are not understandable by children

- insufficient tools to exercise data rights for children

- unprotected connected devices

Penalties for not abiding by the UK Age Appropriate Design Code

Those found to be in breach of the code will be subject to the same potential penalties as those who fail to abide by the European Union’s General Data Protection Regulation (GDPR), which include a fine of up to 4% of global revenue.

As with GDPR, there will be support rather than penalties at first – but the UK’s Information Commissioner has the power to investigate or audit organisations it believes are not complying. As of this writing, there have been no resounding fines or audits.

May 2022: The 5Rights Foundation launches an online safety toolkit for children:

See the Child Online Safety Toolkit by 5Rights Foundation.

November 2021: Canadian Youth Access to Pornography Bill (S-210)

Senator Julie Miville-Dechêne’s bill limiting online access to sexually explicit material by youth, bill S-210.

This enactment makes it an offence for organizations to make sexually explicit material available to young persons on the Internet. It also enables a designated enforcement authority to take steps to prevent sexually explicit material from being made available to young persons on the Internet in Canada.

Making sexually explicit material available to a young person

5 Any organization that, for commercial purposes, makes available sexually explicit material on the Internet to a young person is guilty of an offence punishable on summary conviction and is liable,

- (a) for a first offence, to a fine of not more than $CA 250,000; and

- (b) for a second or subsequent offence, to a fine of not more than $CA 500,000.

Defence — age verification

6 (1) It is not a defence to a charge under section 5 that the organization believed that the young person referred to in that section was at least 18 years of age unless the organization implemented a prescribed age-verification method to limit access to the sexually explicit material made available for commercial purposes to individuals who are at least 18 years of age.

February 2022: Proposed U.S. Child Online Protection Laws

Two bills are moving through the legislative process: the Kids Online Safety Act (KOSA) and the Children and Teens Online Privacy Protection Act upgrade (COPPA 2.0).

About KOSA: Blumenthal/Blackburn proposed Kids online safety legislation

About COPPA 2.0: Senators Markey (D-Mass.) and Cassidy (R-La.) sponsor the Children and Teens’ Online Privacy Protection Act, legislation to strengthen protections for minors online.

The proposals follow the resounding Senate hearings of October 2021, in which TikTok, Snapchat and YouTube executives were questioned over their lack of youth protection.

March 2022: President Biden accuses Social Media of endangering the mental health of America’s youth

From Biden’s State of the Union speech on March 1, 2022, on his desire to prevent social media from harming the mental well-being of American children and teens.

August 2022: California Bill Targeting Social Media Giants for Harm to Children Dies in Legislature

This article is also about all the attempts legislators make at protecting children online that fail due to strong lobbying from social media giants.

In this Wall Street Journal article (available free through this link), journalist intern Sarah Donaldson writes about how lawmakers killed a bill that would have allowed government lawyers to sue social media companies for features that allegedly harm children by causing them to become addicted.

The bill, sponsored by Republican Jordan Cunningham, died in the appropriations committee of the California state senate through a process known as the suspense file. This process allows lawmakers to halt the progress of dozens or even hundreds of potentially controversial bills without a public vote, based on their possible fiscal impact.

Meta, Twitter and Snap had all individually lobbied against the measure, according to state lobbying disclosures.

“We’re glad to see that this bill won’t move forward in its current form. If it had, companies would have been punished for simply having a platform that kids can access,” said Dylan Hoffman, the executive director for California and the Southwest at tech-industry group Technet.

September 2022: California Passes a Minors Online Protection Law: the CAADC or CA Kids Code

California Gov. Gavin Newsom signed the California Age Appropriate Design Code Act into law on September 15, 2022, providing sweeping online protections for children under 18. It’s nice to see that “children” once again means those under 18 (as opposed to the federal Children’s Online Privacy Protection Act, which applies to the collection of personal information from those under 13).

The bipartisan legislation prohibits companies from leading children to provide their personal information online; using children’s personal information; collecting, selling or retaining children’s geolocation details; and profiling children. Before offering new online services that are likely to be accessed by kids, businesses will also be required to complete a data protection impact assessment to be provided to the attorney general.

Buffy Wicks (Democrat) and Jordan Cunningham (Republican) authored and championed this bipartisan Code for children in California which comes into effect in July of 2024.

A Children’s Data Protection Working Group will also be established, and is set to report to the legislature by January 2024 on how best to implement the new law, which goes into effect that year.

The bill was passed unanimously by the California Senate at the end of August. Companies that violate the new rules could face fines of up to $7,500 per affected child.
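To give a sense of scale, the per-child ceiling mentioned above can be turned into a simple upper bound. This is only an arithmetic sketch based on the $7,500 figure quoted in this article; the function name `max_fine_usd` is hypothetical, and actual penalties would depend on how the law is enforced.

```python
# Ceiling quoted in the article: up to $7,500 per affected child.
MAX_FINE_PER_CHILD_USD = 7_500


def max_fine_usd(affected_children: int) -> int:
    """Upper bound on fines for a given number of affected children."""
    if affected_children < 0:
        raise ValueError("affected_children must be non-negative")
    return affected_children * MAX_FINE_PER_CHILD_USD
```

Under these assumptions, a violation affecting 10,000 children would carry a ceiling of `max_fine_usd(10_000)`, that is, $75 million.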

Debunking Three Myths about the CA Kids Code:

The Center for Humane Tech debunks three myths below. Over 90% of California voters support the CA Kids Code, despite tech platforms and trade groups who lobbied against the bill. These tech groups raised three main concerns about the bill that are worth clarifying.

- Myth #1: The bill applies to practically every website on the internet because it targets websites “likely to be accessed by kids” (anyone under 18).

The CA Kids Code applies to for-profit entities that either 1) make $25 million+ in annual gross revenue, 2) buy or sell the personal information of 100,000+ users, or 3) have at least 50% of annual revenue from selling or sharing consumers’ personal information. If a for-profit entity meets any of these three criteria, then the CA Kids Code will apply to the entity’s products or services whose users include a significant number of CA children and teens. These products and services will need to comply with the Code for all kids in CA.

- Myth #2: The bill forces websites to verify everyone’s ages, forcing companies to collect government IDs or conduct biometric scans to verify users’ identities.

The CA Kids Code asks platforms to reasonably estimate users’ ages by sorting users into likely age bands. It also specifies that platforms should use existing data to do so. (This method is not only possible: platforms already use age estimation to target advertising.)

The CA Kids Code does not require age verification, nor is age verification a likely outcome of the bill: no age verification scheme has emerged in the UK following the UK’s AADC.

- Myth #3: The bill will set a dangerous precedent of states creating their own contradicting bills, making the internet unusable.

While it is possible that states may pass contradicting kids’ protection bills, it is more likely that we see some version of the “Brussels Effect” – either states will use the CA Kids Code as a model for their own, or tech platforms will apply the highest standard of care federally by default, as this will reduce operational burden.
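The entity-level test described under Myth #1 can be sketched as a simple "any of three" check. This is a minimal illustration built only from the thresholds quoted above, not a legal test: the `Business` fields and the function name are hypothetical, and the second step (whether a product’s users include a significant number of California children and teens) is left out because the text gives no number for it.

```python
from dataclasses import dataclass

# Thresholds as summarized in the Myth #1 paragraph above.
REVENUE_THRESHOLD_USD = 25_000_000
USERS_THRESHOLD = 100_000
DATA_REVENUE_SHARE_THRESHOLD = 0.50


@dataclass
class Business:
    """Hypothetical description of an entity, for illustration only."""
    for_profit: bool
    annual_gross_revenue_usd: float
    users_with_data_bought_or_sold: int
    revenue_share_from_personal_data: float  # fraction between 0 and 1


def meets_entity_criteria(b: Business) -> bool:
    """True if a for-profit entity meets any one of the three criteria."""
    if not b.for_profit:
        return False
    return (
        b.annual_gross_revenue_usd >= REVENUE_THRESHOLD_USD
        or b.users_with_data_bought_or_sold >= USERS_THRESHOLD
        or b.revenue_share_from_personal_data >= DATA_REVENUE_SHARE_THRESHOLD
    )
```

A small hobby site such as `Business(True, 40_000, 0, 0.0)` fails all three criteria, which is the point of the rebuttal: the Code does not reach "practically every website".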

February 2022: Proposed law in France against Overexposure to Screens:

The present bill is dedicated to the implementation of a public policy of prevention of risks related to digital screens for the youth. This will be done by adding digital addiction to addictions such as smoking or alcoholism in the public health code.

Article 1 provides for the establishment of a digital information platform for parents. It also provides for the development of appropriate measurement tools to better assess the risks induced by children’s exposure to screens.

A prevention policy relies, to a large extent, on professionals in contact with children.

Article 1 thus provides for the integration of specific modules on the risks linked to digital screens for young people into the training of health and medical/social professionals, as well as early childhood professionals. The aim is to raise awareness on this topic and to put them in a position to engage in a dialogue with parents.

In addition, Article 1 requires special mentions on the packaging of computers, tablets and cell phones in order to inform consumers of the dangers associated with overexposure to screens. It also introduces prevention messages in all advertisements for these products.

Details of France’s Proposed Law against Overexposure to Screens:

Article 1 finally intends to limit the use of cell phones, tablets, laptops and similar devices in early childhood facilities and in nursery and elementary school. It requires these establishments to provide restrictive rules around this.

Article 2 provides for the inclusion of recommendations on the proper use of screens for young people in the pregnancy booklet, a document that is widely distributed to young parents.

Article 3 includes the policy of prevention of risks related to screens among the missions devolved to the President of the Departmental Council in his role of maternal and child protection.

Article 4 gives a central role to the departmental commissions for the care of young children, in order to collect and disseminate messages on the prevention of risks related to overexposure to screens, aimed at early childhood professionals but also at parents.

Finally, article 5 gives the territorial educational project an explicit role in the prevention of the overexposure of pupils to screens during extracurricular time.

Unfortunately, despite President Macron’s promises, this law was never passed in France.

Nov. 2024: Australian Law Prevents Children under 16 from Opening Social Media Accounts:

Australia recently passed a law to prevent children under 16 from creating accounts on social media platforms.

The bill, which the government calls a “world leading” move to protect young people online, was approved in the Senate on Thursday with support from both of the country’s major parties. The lower house of Parliament had passed it earlier in the week.

“This is about protecting young people — not punishing or isolating them,” said Michelle Rowland, Australia’s communications minister. She cited exposure to content about drug abuse, eating disorders and violence as some of the harms children can encounter online.

The legislation has broad support among Australians, and some parental groups have been vocal advocates. But it has faced backlash from an unlikely alliance of tech giants, human rights groups and social media experts.

What’s in the Australian Law?

The law requires social media platforms to take “reasonable steps” to verify the age of users and prohibit those under 16 from opening accounts.

It does not specify which platforms the ban will cover — that will be decided later — but the government has named TikTok, Facebook, Snapchat, Reddit, Instagram and X as sites it is likely to include.

Three broad categories of platforms will be exempt: messaging apps (like WhatsApp and Facebook’s Messenger Kids); gaming platforms; and services that provide educational content, including YouTube. Those 15 and under will also still be able to access platforms that let users see some content without registering for an account, like TikTok, Facebook and Reddit.

Our take is that it’s performative, which is also the conclusion of one observer. “The primary use of this legislation, let’s not pretend otherwise, is to make it look like our Parliament is taking a stand,” said Annabel Crabb, a top journalist at Australia’s national broadcaster.